AI Strategy & Innovation Design Workshop

These workshops help your business redesign workflows around how work should be done with Agentic AI and develop an AI strategy that creates measurable organisational value, with a focus on Execution, Governance and Change.

Workshop Outcomes

- Discovery & Alignment of AI Strategic Objectives

- Identification of High-Value Agentic AI Use Cases across the Organization

- Evaluation of challenges and opportunities associated with adopting and operationalizing Agentic AI workflows

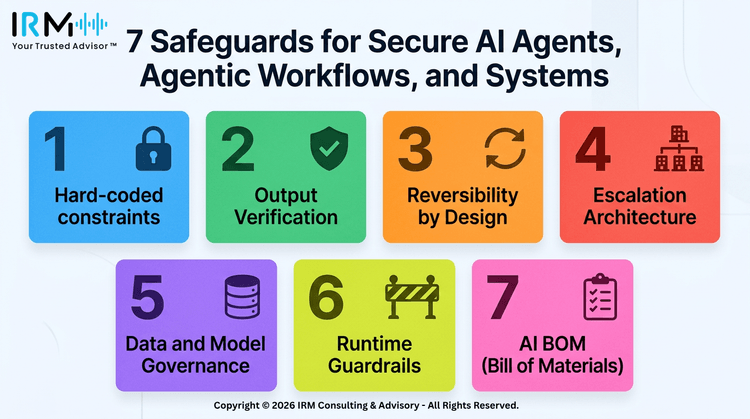

- Identification of governance, data, security, and regulatory risks

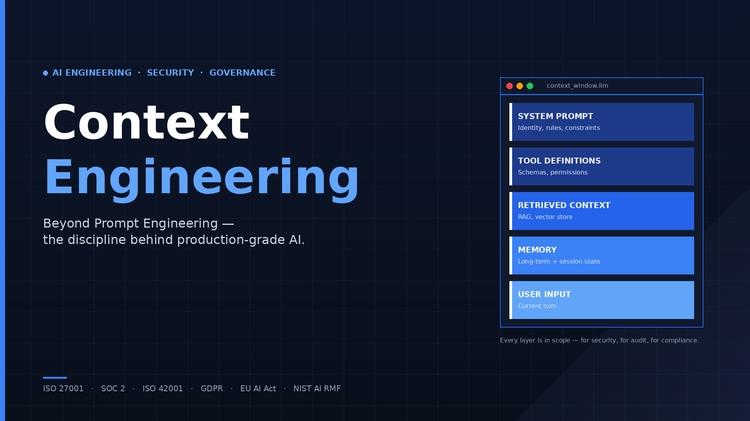

- Agentic AI Workflow Architecture concepts aligned with AI Governance Frameworks

- Agentic AI Playbook, Implementation Plan and Roadmap